ChatGPT has received a major update that improves how users create and edit images. The update is powered by GPT Image 1.5, which follows user instructions more closely and supports both creating new images and targeted edits to uploaded images. This makes ChatGPT more useful for creative and practical image tasks.

Image Creation Gets More Accurate

ChatGPT now uses a new image generation model called GPT Image 1.5, which brings clear improvements to how images are created. It generates images much faster than the earlier version, so users can try more ideas without long waits.

The new model also understands instructions better. When users describe what they want, ChatGPT is more likely to produce images that match the request closely. This reduces the need for repeated prompts or corrections, whether it’s a simple illustration or a more involved visual.

Improved Image Editing Experience

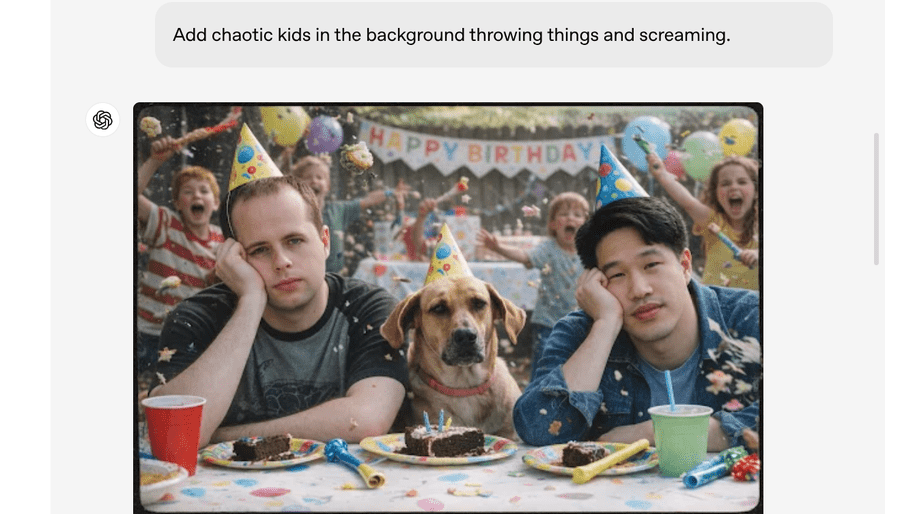

ChatGPT now makes it easier to edit existing images: users can upload a photo and request specific changes. Instead of reworking the entire image, the model changes only the parts users ask it to edit, which makes the process more controlled and efficient.

Because the new image model follows instructions more closely, the final result is much closer to what was actually requested. At the same time, it retains key details, such as lighting, background, and the appearance of people, across multiple rounds of edits. This makes it practical for everyday tasks: correcting photos, making design changes, and experimenting with creative ideas without sacrificing image quality.

New Images Section in ChatGPT

ChatGPT now features a dedicated Images area that brings image-related tools together. This tab replaces the Library and is reachable directly from the sidebar, making navigation easier. The Images section includes built-in styles and creative presets to help users get started quickly. It also keeps all previously created images in one place, making it easier to manage visuals users might want to reuse.

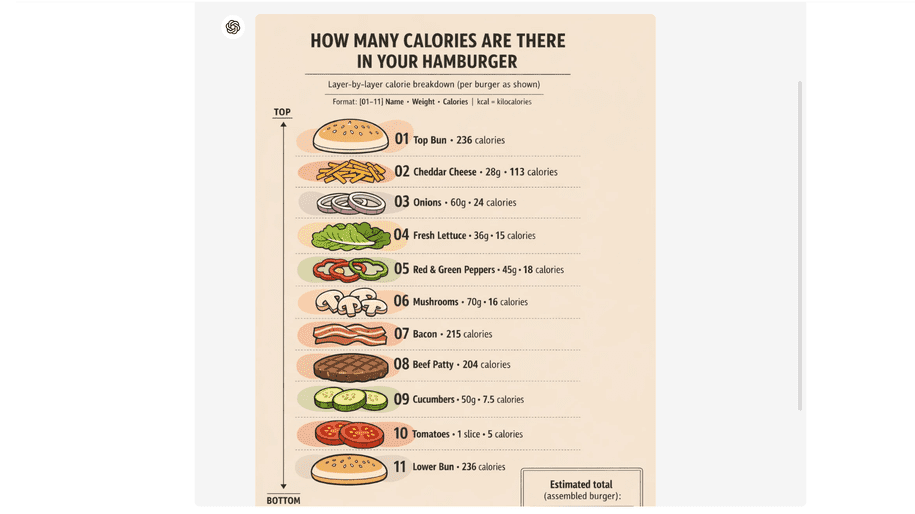

The new model also generates images with clearer, more accurate text. Even small text comes out sharper, making the whole image easier to read and more visually balanced. This should help users produce better visuals such as posters, infographics, and promo graphics.


