Data removal services claim to remove your details from data broker databases, thereby limiting how often your information is bought and sold. They are becoming increasingly popular as more people realize how widely their personal data is shared online. However, uncertainty is common. Do they work? Are they legitimate or just another online scam?

People want to know whether these tools are actually effective and how they handle user data, especially since results aren't instant and not every provider offers the same level of automation, coverage, or follow-up.

That brings us to Incogni, one of the most talked-about names in this industry. This in-depth review examines whether Incogni is good, how well it actually works, whether it’s trustworthy, and how it positions itself against the competition in 2026.

Incogni Overview (2026)

| Category | Details |
|---|---|
| Pricing | From $7.99/month when billed annually, or from $15.98/month when billed monthly |
| Service type | Automated personal data removal |
| Coverage | 420+ data brokers (public and private listings) |
| Removal model | Legal opt-out and deletion requests |
| Follow-ups | Recurring cycles (60 days for public and 90 days for private listings) |
| Availability | The US, the UK, Canada, the EU, Switzerland, Norway, Iceland, Liechtenstein, the Isle of Man |
| Verification and recognition | Limited assurance assessment by Deloitte, Editors’ Choice Awards from PCMag and PCWorld |
| Free plan | No, but a 30-day money-back guarantee |
| Strengths | Full automation, broad data broker coverage, recurring removals, third-party verification (Deloitte), affordability |
| Limitations | No screenshots of removals, no exposure scan details, no free trial |

What Is Incogni?

Incogni is an automated personal data removal service. It’s designed to reduce your online exposure by contacting data brokers and requesting the deletion of your personal information from their databases. This way, you don’t have to chase numerous companies individually. Incogni also tracks brokers’ responses and sends follow-ups when needed.

Instead of leaving you with tiresome, never-ending manual opt-outs, Incogni centralizes the process and bases its requests on privacy laws like the GDPR and CCPA.

The provider offers the following features:

- Automated data removals: Incogni sends removal requests on your behalf to over 420 brokers (additional sites in higher-tier plans).

- Custom data removals: You can submit specific sites or data sources for additional takedown attempts (plan-dependent feature).

- Recurring removal cycles: Incogni automatically resends removal requests (every 60-90 days) to avoid relistings.

- Progress reports and tracking dashboard: The service offers real-time tracking of which brokers were contacted and how they responded.

- Family coverage: Specific plans allow adding multiple household members under one account for wider protection.

- Ongoing monitoring: Incogni doesn’t stop after one round of requests, maintaining broker coverage over time.

Its focus isn’t a one-time cleanup but ongoing data exposure management.

How Incogni Actually Works

Incogni’s process is built around automation and legal rights.

Step 1: Authorization

After you create an account, you need to verify your identity. This will allow Incogni to legally act on your behalf when contacting data brokers.

Step 2: Broker Outreach

Incogni starts sending deletion requests immediately. Its data broker list includes hundreds of brokers, both public and private listings.

Step 3: Tracking

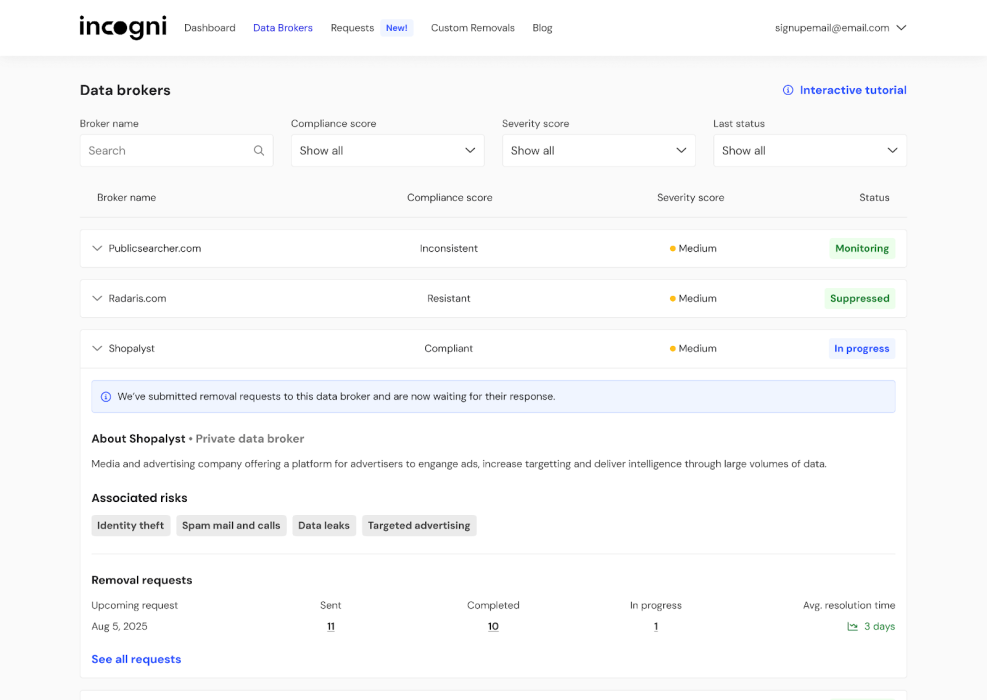

Incogni’s straightforward dashboard logs all the responses, confirmations, and pending cases, so you can (but you don’t have to) oversee the process.

Step 4: Recurring Removals

As data can easily reappear some time after its removal, Incogni submits requests on a cycle. Usually, it’s every 60 days for public brokers and 90 days for private brokers. This step is essential if you want to achieve long-term effectiveness.

This recurring system is what sets Incogni apart from one-time opt-outs.
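As a rough illustration of the cycle described above, the sketch below computes resend dates from the 60- and 90-day intervals. The function name, the category labels, and the start date are assumptions made for illustration; this is not Incogni's actual scheduling logic.

```python
from datetime import date, timedelta

# Cycle lengths as stated in this review; the keys are illustrative labels.
CYCLE_DAYS = {"public": 60, "private": 90}


def resend_dates(first_request: date, broker_type: str, count: int) -> list[date]:
    """Return the next `count` resend dates after the first request."""
    interval = timedelta(days=CYCLE_DAYS[broker_type])
    return [first_request + interval * n for n in range(1, count + 1)]


# Hypothetical start date, chosen only for the example.
start = date(2026, 1, 1)
public_dates = resend_dates(start, "public", 3)
private_dates = resend_dates(start, "private", 3)

for d in public_dates:
    print("public broker resend:", d.isoformat())
for d in private_dates:
    print("private broker resend:", d.isoformat())
```

With these intervals, a public broker would be contacted roughly six times a year and a private broker about four times, which is why relistings tend to be caught within a few months at most.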

How Long Do Removals Take?

There’s no fixed timeline: it depends on the legal response window and each broker’s internal processes, and both can be lengthy.

Under privacy regulations such as the GDPR and CCPA, companies typically have about a month (GDPR) to 45 days (CCPA) to respond to data removal requests, and extensions are possible. Some respond quickly; others require follow-ups; some even try to ignore requests. That’s why Incogni uses recurring cycles.

Broker databases refresh on a regular basis, which makes removals an ongoing process. Over time, repeated requests reduce reappearance. According to Deloitte, since 2022, Incogni has processed 245+ million requests.

Pricing

| Plan | Starting price per month (billed annually) | Starting price per month (billed monthly) | Includes |
|---|---|---|---|
| Standard | $7.99 | $15.98 | 420+ broker removals, recurring cycles, dashboard tracking |
| Unlimited | $14.99 | $29.98 | Above + priority processing and unlimited custom removals |
| Family | $15.99 | $31.98 | Standard coverage for multiple household members |
| Family Unlimited | $22.99 | $45.98 | All features listed above + family coverage |
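To make the difference between the two billing options concrete, here is a small sketch that totals each plan over twelve months using the starting prices from the table. It assumes that annual billing simply charges twelve times the listed monthly rate and ignores taxes, promotions, and currency differences; the names used are illustrative.

```python
# Starting prices per month in US cents: (billed annually, billed monthly).
PLANS = {
    "Standard": (799, 1598),
    "Unlimited": (1499, 2998),
    "Family": (1599, 3198),
    "Family Unlimited": (2299, 4598),
}


def yearly_costs(plan: str) -> tuple[int, int]:
    """Return the 12-month cost in cents for annual and monthly billing."""
    annual_rate, monthly_rate = PLANS[plan]
    return annual_rate * 12, monthly_rate * 12


for plan in PLANS:
    annual, monthly = yearly_costs(plan)
    saving = monthly - annual
    print(
        f"{plan}: ${annual / 100:.2f} (annual) vs "
        f"${monthly / 100:.2f} (monthly), saving ${saving / 100:.2f}"
    )
```

On these list prices, the monthly rate is exactly double the annual rate for every plan, so staying subscribed for a full year costs twice as much on monthly billing.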

Incogni is also included in broader privacy bundles. The Surfshark One+ plan includes Incogni alongside Surfshark’s VPN and other security tools. There’s also an all-in-one data removal and identity-theft protection bundle with Nord Protect, but it’s available to US users only.

Customer Support

Incogni’s customer support is handled by its Help Center and ticket-based system. Users can easily access helpful guides and FAQs as well as submit requests for assistance. Incogni’s customer support team is known to respond quickly and to the point.

Email-style case support is the main channel. Live chat is available, while phone support comes with Unlimited plans only, giving higher-tier subscribers a more direct contact option.

User Experience

Incogni is designed to be low-maintenance, which suits people who don’t want to handle opt-outs manually, a task that can easily become a full-time job. Setup typically takes only minutes, after which the service runs in the background. The dashboard is the main control center. It shows contacted brokers, confirmations, and pending requests or required follow-ups.

There are no spreadsheets to manage, no legal templates to send manually, and no need to track deadlines or reappearances. Accordingly, the interface focuses on visibility rather than complex technical details.

In short, Incogni provides transparency without requiring users to actively manage each step of the process.

Is Incogni Legit or a Scam?

Incogni is not a scam. Several verifiable signals from reputable sources confirm Incogni’s legitimacy.

Independent Limited Assessment

A limited assurance report from Deloitte examined Incogni’s removal process and concluded that it works as promised. This type of third-party assessment is still rare in the data removal industry, and external validation matters when you entrust a provider with sensitive information.

Scale and Transparency

Deloitte also confirmed Incogni’s claim that it has processed hundreds of millions of removal requests for its customers. The provider keeps documentation of how each request is sent and tracked. Moreover, its removal model relies on formal privacy-law opt-out requests, not informal takedown attempts.

Expert Recognition

Incogni has received Editors’ Choice Awards from both PCMag and PCWorld. Accompanying reviews praise the provider’s reliable performance, strong automation, broad data broker coverage, and transparency. They also highlight the handling of ongoing removal requests that require minimal effort from the user.

Public User Feedback

Incogni holds a rating of 4.4 on Trustpilot based on over 2,400 reviews. The most frequent positive mentions include:

- easy setup and automation,

- clear visibility and transparency about the removal progress,

- noticeable reductions in unsolicited messages and calls over time.

Some critical reviews point to how long it takes to see results and to the fact that no service can guarantee complete data disappearance. That is to be expected in this industry, and no provider, including Incogni, promises 100% successful removals.

Overall sentiment suggests that the service works as described, given realistic expectations.

Final Verdict: Incogni Is a Practical Tool for Ongoing Data Exposure Reduction

Incogni is an ongoing data removal service, not a one-time fix. It automates legally backed opt-out requests to 420+ data brokers, repeats them on a schedule as needed, and provides users with clear reports.

Independent assessment, editorial recognition, and positive user feedback indicate that the service works in a structured and reliable way.

Users need to know, though, that results develop gradually: legal responses take time, and many broker databases constantly refresh. In that context, Incogni stands out as a long-term privacy management tool focused on steadily reducing how broadly your personal data circulates online.

FAQ

Users typically report a significant decrease in marketing calls and emails, as the service uses legal erasure requests to require data brokers to delete your information.

While initial requests are generally dispatched within 24 hours, brokers often take 30 to 45 days to comply. Incogni continues to monitor these brokers and resends requests if they do not respond.

To identify your records accurately, Incogni requires your full name, email address, and physical address. You can provide multiple variations of these details to ensure older or alternate profiles are caught.

Incogni offers an Android app for mobile management, though its full suite of features is primarily accessible through a standard web browser.

If your Incogni service is part of a package like Surfshark One+, you must manage the cancellation through that specific provider’s billing department.
